In research published this week in Cerebral Cortex, DRCMR researchers were able to decode which sound a person is listening to by analysing his or her brain activity patterns.

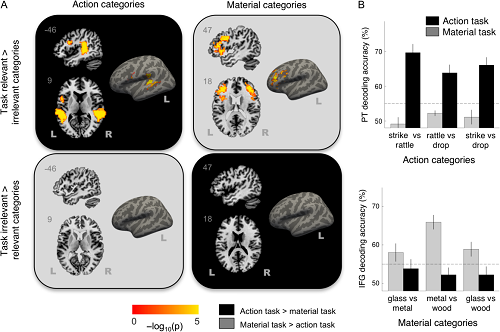

During functional MRI scanning, participants in the study were presented with recordings of sound events, such as pieces of glass dropping to the floor or sticks of wood struck together. By analysing the brain images with pattern analysis techniques, the researchers could determine not only whether the participants had listened to sound sources made of wood, glass, or metal, but also how the sound had been produced.

Focusing attention on sound

The study revealed an important insight about auditory processing in the brain. During scanning, the same sounds were played twice to the participants. First, the listeners were asked to report the material of the sound object they had heard. The same sound was then played again, but this time the participants were asked to report which action they had heard.

The researchers found that patterns of brain activity in the auditory cortex changed depending on which aspect of the sound the participants were paying attention to. In regions of the auditory cortex that have been thought to mainly process acoustic features, neural activity adapted to encode the auditory information that was currently relevant to the listener.

Figure 1: Illustration from the paper showing cortical brain regions where information about the sound events could be decoded. The success of decoding was found to depend on which aspects of the sounds the participants paid attention to.

The research identified two key brain regions involved in this adaptive auditory processing: the prefrontal cortex and the auditory cortex. In the paper, the authors suggest that the prefrontal cortex signals a listener’s current interpretation of a sound stimulus, while the auditory cortex enhances particular acoustic features that are relevant to that interpretation.

Senior Researcher at DRCMR Jens Hjortkjær explains: “Much is known about speech processing in the brain and about what happens when listeners focus attention on a particular talker in a noisy room. But neural mechanisms involved in extracting relevant information from sounds are also found in the auditory brains of animals that do not speak. This is one of the reasons why we are focusing on non-speech sounds and on understanding how the brain makes sense of our everyday sound environment.”

The full article is available via the DOI below:

Jens Hjortkjær, Tanja Kassuba, Kristoffer H Madsen, Martin Skov, Hartwig R Siebner. Task-Modulated Cortical Representations of Natural Sound Source Categories. Cerebral Cortex. https://doi.org/10.1093/cercor/bhx263